Abstract

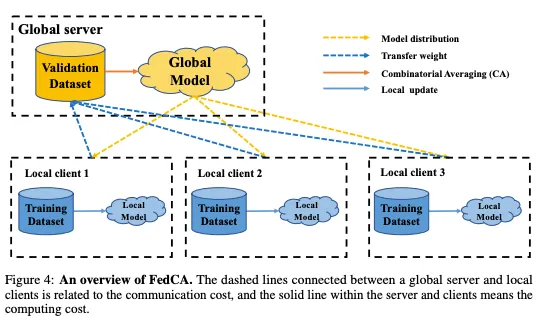

Federated learning has emerged as an innovative paradigm of collaborative machine learning. Unlike conventional machine learning, a global model is collaboratively learned while data remains distributed over a tremendous number of client devices, thus not compromising user privacy. However, several challenges still remain despite its glowing popularity; above all, the global aggregation in federated learning involves the challenge of biased model averaging and lack of prior knowledge in client sampling, which, in turn, leads to high generalization error and slow convergence rate, respectively. In this work, we propose a novel algorithm called FedCM

that addresses the two challenges by utilizing prior knowledge with multi-armed bandit-based client sampling and filtering biased models with combinatorial model averaging. Based on extensive evaluations using various algorithms and representative heterogeneous datasets, we showed that FedCM significantly outperformed the state-of-the-art algorithms by up to 37.25% and 4.17 times, respectively, in terms of generalization accuracy and convergence rate.