Abstract

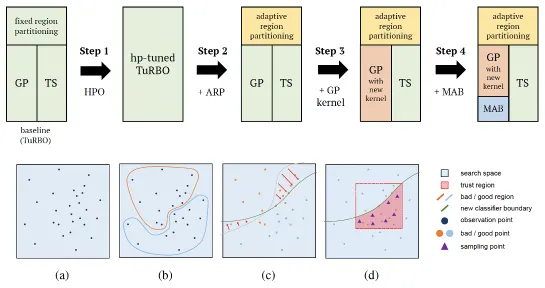

In machine learning, choosing hyperparameters is often more an art than a science, requiring a labor-intensive search guided by expert experience. Automating hyperparameter optimization to remove human intervention is therefore highly appealing, especially for black-box functions. Demand for solving such black-box tasks with better generalization has been growing, although the task-dependent nature of the problem makes it hard to solve. The Black-Box Optimization challenge (NeurIPS 2020) required competitors to build a robust black-box optimizer across different domains of standard machine learning problems. This paper describes, step by step, the approach of team KAIST OSI, which outperforms the baseline algorithms by up to +20.39%. We first strengthen the local Bayesian search under the concept of region reliability. We then design a combinatorial kernel for the Gaussian process. In a similar vein, we combine the Bayesian search with a multi-armed bandit (MAB) approach to select values according to variable type: real and integer variables are handled by the Bayesian search, while boolean and categorical variables are handled by the MAB. Empirical evaluations demonstrate that our method outperforms existing methods across different tasks.
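The type-based split described above can be illustrated with a minimal sketch. It is not the team's implementation: the search space `SPACE`, the helper `split_by_type`, and the choice of UCB1 as the bandit policy are all assumptions made for illustration, since the abstract does not specify which MAB algorithm is used. The Bayesian side is omitted; only the routing and the bandit are shown.

```python
import math

# Hypothetical search space: variable name -> (type, domain).
SPACE = {
    "learning_rate": ("real", (1e-4, 1e-1)),
    "max_depth": ("int", (1, 10)),
    "use_bias": ("bool", (False, True)),
    "kernel": ("cat", ("linear", "rbf", "poly")),
}


def split_by_type(space):
    """Route real/int variables to the Bayesian side, bool/cat to the bandit side."""
    bayes = [k for k, (t, _) in space.items() if t in ("real", "int")]
    bandit = [k for k, (t, _) in space.items() if t in ("bool", "cat")]
    return bayes, bandit


class UCB1:
    """UCB1 bandit over the discrete choices of one variable (assumed policy)."""

    def __init__(self, arms):
        self.arms = list(arms)
        self.counts = [0] * len(self.arms)
        self.sums = [0.0] * len(self.arms)

    def select(self):
        # Try every arm once before applying the confidence bound.
        for i, c in enumerate(self.counts):
            if c == 0:
                return i
        total = sum(self.counts)
        scores = [
            self.sums[i] / self.counts[i]
            + math.sqrt(2.0 * math.log(total) / self.counts[i])
            for i in range(len(self.arms))
        ]
        return max(range(len(self.arms)), key=scores.__getitem__)

    def update(self, i, reward):
        # Record the observed reward (e.g. a normalized score) for arm i.
        self.counts[i] += 1
        self.sums[i] += reward
```

In such a setup, one bandit would be kept per boolean or categorical variable and updated with the observed score after each evaluation, while the real and integer variables would be proposed by the Gaussian-process-based search.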