Abstract

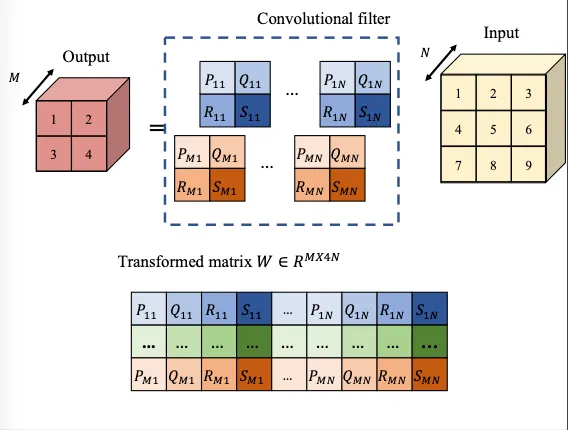

Recent research on deep Convolutional Neural Networks (CNNs) faces the challenges of vanishing/exploding gradients, training instability, and feature redundancy. Orthogonality Regularization (OR), which adds a penalty term that encourages orthogonality in the network, could remedy these challenges, yet it is surprisingly unpopular in the literature. This work revisits OR approaches and empirically answers the question: which OR technique is the most powerful, even when compared with other regularizers such as weight decay and spectral norm regularization? We begin by presenting the improvements obtained with various regularization techniques, focusing on OR approaches, across a variety of architectures. We then disentangle the benefits of OR relative to other regularization approaches by connecting them to norm preservation and feature redundancy in forward and backward propagation. Our investigations show that Kernel Orthogonality Regularization (KOR) approaches, which directly penalize the deviation of convolutional kernel matrices from orthogonality, consistently outperform other techniques. We propose a simple KOR method that considers both row and column orthogonality and is empirically the most effective at mitigating the aforementioned challenges. We further discuss several settings, across recent CNN models and various benchmark datasets, in which KOR is more effective.
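To make the idea of penalizing both row and column orthogonality concrete, the following is a minimal sketch and not the exact formulation proposed in this work. It assumes the common soft-orthogonality form $\|WW^\top - I\|_F^2 + \|W^\top W - I\|_F^2$, where $W$ is a convolutional kernel of shape `(C_out, C_in, k, k)` reshaped into a `(C_out, C_in * k * k)` matrix. The function name `kernel_orthogonality_penalty` and the toy shapes are hypothetical.

```python
import numpy as np


def kernel_orthogonality_penalty(kernel):
    """Illustrative row + column soft-orthogonality penalty (assumed form)."""
    c_out = kernel.shape[0]
    # Flatten each output filter into one row: (C_out, C_in * k * k).
    w = kernel.reshape(c_out, -1)
    # Row term: squared Frobenius distance of W W^T from the identity.
    row_term = np.sum((w @ w.T - np.eye(w.shape[0])) ** 2)
    # Column term: squared Frobenius distance of W^T W from the identity.
    col_term = np.sum((w.T @ w - np.eye(w.shape[1])) ** 2)
    return row_term + col_term


rng = np.random.default_rng(0)

# A random kernel whose reshaped matrix is 4 x 4.
random_kernel = rng.normal(size=(4, 1, 2, 2))

# An orthogonal 4 x 4 matrix (via QR) reshaped into the same kernel shape.
q, _ = np.linalg.qr(rng.normal(size=(4, 4)))
orthogonal_kernel = q.reshape(4, 1, 2, 2)

penalty_random = kernel_orthogonality_penalty(random_kernel)
penalty_orthogonal = kernel_orthogonality_penalty(orthogonal_kernel)
```

In this sketch the penalty vanishes (up to floating-point error) only when the reshaped matrix is square and orthogonal. For a non-square matrix the two terms cannot both be zero, so the sum only measures how far the kernel is from having orthonormal rows and orthonormal columns. In training, such a term would typically be scaled by a coefficient and added to the task loss.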